Decision Training Case Study, Part 1

NHL Referees and Linesmen

A decision training case study in hockey

September 1, 2023

Teaching a Standard for Officiating Decisions

Decision training for new referees and linesmen

The National Hockey League (NHL) wanted to standardize the way calls are made in games, and this project became our first decision training case study in hockey. The NHL contracted deCervo to build a new decision training program to tackle this problem, with the goal of standardizing the decisions referees and linesmen make on the ice. Calls such as icing or tripping, for instance, can be hard to make. Starting from carefully chosen videos, deCervo layered a curriculum on top of the raw footage to build a program that teaches new referees and linesmen how to make these calls. Below, we recap highlights from the first four seasons of this work with the NHL.

Improving Decision Ability with Training

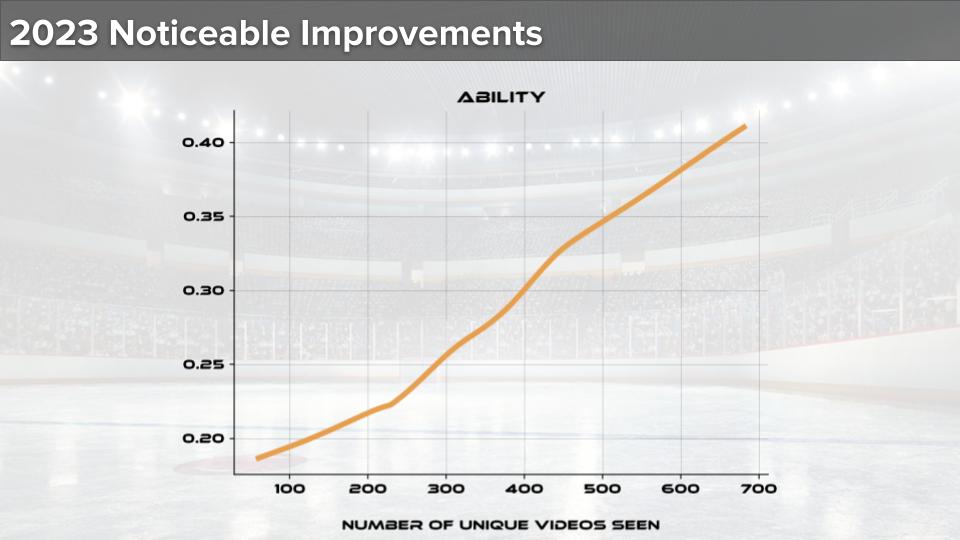

uCALL is the name of the decision training app we built for the NHL (learn more about custom decision training apps here). We track each referee’s and linesman’s capability in uCALL with a measure called the “ability score,” which is plotted on the y-axis of the graph above. uCALL chooses which plays a referee/linesman sees based on his ability score: with a high score, he sees harder plays to make a penalty call on; with a low score, he sees easier ones.
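
The source does not describe how uCALL picks the next play. As a minimal sketch, assuming each play-video carries a numeric difficulty score on the same scale as the ability score, a simple "nearest difficulty" rule could look like the Python below. `Play`, `pick_next_play`, and the shared scale are hypothetical illustrations, not uCALL's actual data types or API.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Play:
    """Hypothetical play-video record; not a uCALL data type."""
    video_id: str
    difficulty: float  # assumed to share a scale with the ability score


def pick_next_play(ability: float, plays: list[Play], seen: set[str]) -> Play | None:
    """Return the unseen play whose difficulty is closest to `ability`.

    Hypothetical selection rule: a higher ability score yields harder plays,
    a lower one yields easier plays. Returns None once every play is seen.
    """
    candidates = [p for p in plays if p.video_id not in seen]
    if not candidates:
        return None
    return min(candidates, key=lambda p: abs(p.difficulty - ability))


plays = [Play("easy", -1.0), Play("medium", 0.0), Play("hard", 1.5)]
print(pick_next_play(1.2, plays, seen=set()).video_id)   # hard
print(pick_next_play(-0.8, plays, seen=set()).video_id)  # easy
```

Nearest-match is only the simplest choice; a real system might deliberately aim a little above the current score so each play is a stretch.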

We begin each season at the NHL Exposure Combine, an event the NHL holds to recruit and train new officials (read about our 2018 work at this event here). At the Combine, everyone takes a baseline assessment, which sets their initial ability score. Starting from that (lower) score, we serve each user penalty call videos of increasing difficulty. The graph above shows what happens as they progress: the more unique videos (or plays) they work through, the higher their ability score climbs. As a result, their capability as a referee/linesman increases.
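
How uCALL actually updates the ability score is not stated here. One common way to get this behavior is an Elo-style update under a Rasch (logistic) model, sketched below as an assumption: the model, the step size `k`, and the baseline value are all hypothetical. Each graded call nudges the score toward the outcome, and a correct call on a harder play raises it more than a correct call on an easier one.

```python
import math


def expected_correct(ability: float, difficulty: float) -> float:
    """Assumed Rasch/Elo-style probability that the user calls the play correctly."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


def update_ability(ability: float, difficulty: float, correct: bool, k: float = 0.3) -> float:
    """Nudge the ability score toward the graded outcome.

    `k` is a hypothetical step size, not a uCALL parameter.
    """
    outcome = 1.0 if correct else 0.0
    return ability + k * (outcome - expected_correct(ability, difficulty))


ability = -1.0  # hypothetical baseline from a Combine assessment
for _ in range(5):
    # Each play is matched to the current score, so the expected success rate is 0.5.
    ability = update_ability(ability, difficulty=ability, correct=True)
print(round(ability, 2))  # -0.25
```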

Penalties By Difficulty Score Drive Decision Training

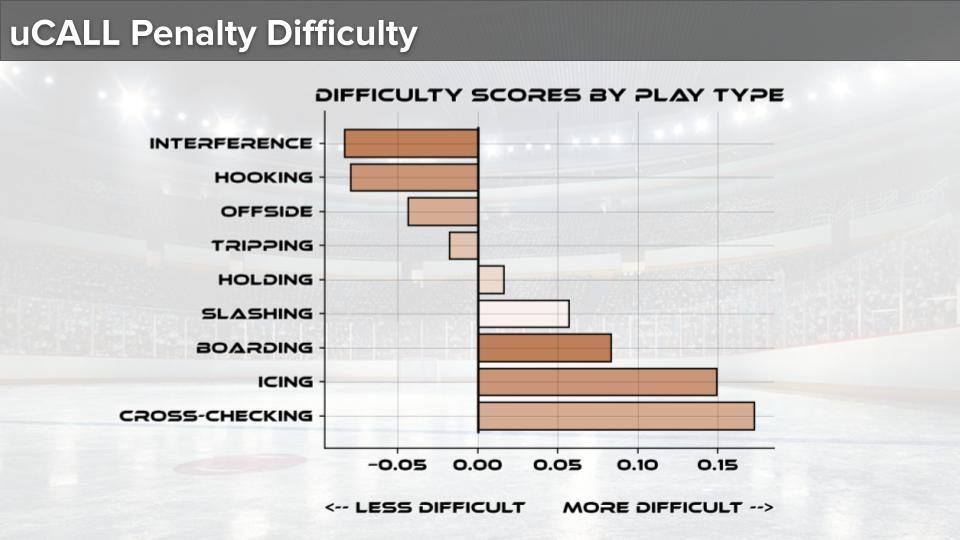

uCALL is effective at matching each user with new penalty call videos, and this is what allows it to make better referees/linesmen. uCALL grades every response to a penalty video against whether the NHL Officiating Department believes a penalty occurred. As more referees/linesmen see these videos, uCALL learns which videos are harder to call than others.

There are many reasons why a penalty may be easy or difficult to call. Positioning could block the view of something a player does that would draw a penalty. Or the play could happen so quickly that the referee/linesman misses the relevant action. Whatever the reason, uCALL scores how difficult or easy each play-video is to call. This difficulty is based only on how often the play is called correctly, i.e., according to the NHL rules.
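
Since difficulty depends only on how often a play is called correctly, a minimal sketch is to grade each call against the Officiating Department's ruling and convert the counts into a score. The smoothed log-odds formula and the function names below are assumptions for illustration, not uCALL's published method.

```python
from __future__ import annotations

import math


def tally(calls: list[bool], official_call: bool) -> tuple[int, int]:
    """Count (correct, wrong) calls against the Officiating Department's ruling.

    `calls` holds each user's penalty / no-penalty decision on one video.
    """
    correct = sum(1 for c in calls if c == official_call)
    return correct, len(calls) - correct


def difficulty_score(correct: int, wrong: int) -> float:
    """Assumed formula: smoothed log-odds of a wrong call.

    > 0 means usually called wrong (hard); < 0 means usually called right (easy).
    Add-one smoothing keeps the score finite for unseen or unanimous videos.
    """
    return math.log((wrong + 1) / (correct + 1))


print(tally([True, True, False, True], official_call=True))  # (3, 1)
print(difficulty_score(9, 1) < 0 < difficulty_score(1, 9))    # True
```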

The figure above shows that some plays are easier to make a penalty call on than others. For instance, cross-checking is the most difficult for these referees/linesmen in training, while interference is the least difficult. Between interference and cross-checking lie many gradations of difficulty, and these gradations allow uCALL to match the right play to the user’s ability score at the right time. The goal is always to improve the user’s ability score, and that is exactly what the first graph showed.

How Long Until Decision Training Is Optimized?

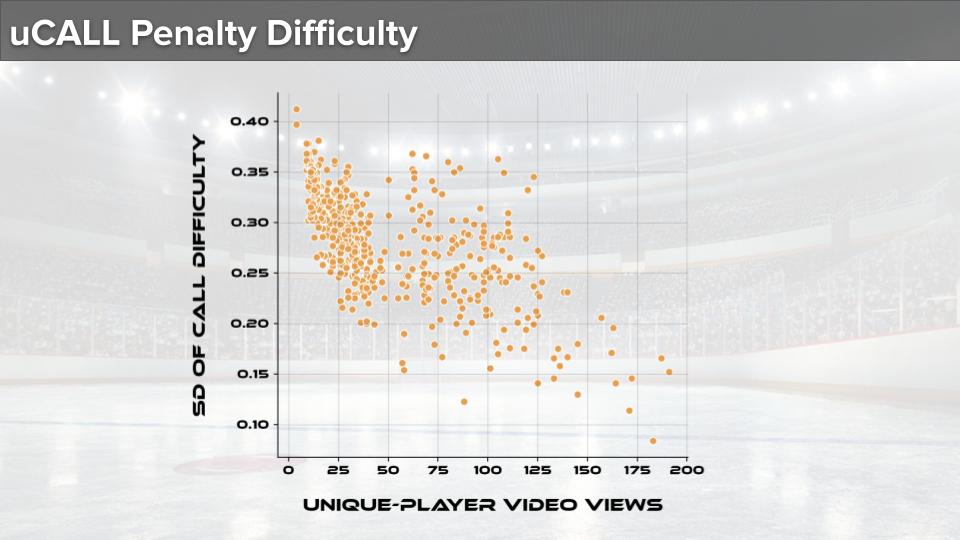

The previous section showed how a video’s difficulty score is a driving factor in improving referees/linesmen in uCALL. But could the NHL have expected this capability in the first year of using uCALL, back in 2018? The answer is no, and the graph above shows why.

This graph shows the “standard deviation” (see more about this way of summarizing data here) of all video difficulty scores in uCALL, plotted against how many unique (or new) videos referees/linesmen have worked through in uCALL. On the left, few videos have been seen (early in the season, when users begin), and the standard deviation (or spread) in difficulty scores is quite high. On the right, many videos have been seen (late in the season, after much usage), and the standard deviation in difficulty scores is much lower (almost 2x-3x lower).

As the difficulty scores’ standard deviation drops, uCALL gets better at matching a given penalty video to a referee/linesman’s capability. Just like many mobile technologies, uCALL gets better for an individual user as he uses it more and as more people use it (see other examples of such technologies here).
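
The graph above comes from uCALL's own data; the Python below is only a synthetic illustration of one plausible contributor to the drop, namely sampling noise. A difficulty score estimated from a handful of views is noisy, and the sampling standard deviation of a proportion shrinks as √(p(1−p)/n). The simulation gives every video the same true difficulty, so all of the spread it reports is noise; every parameter is hypothetical. In real data, genuine differences between plays (such as cross-checking versus interference) would keep the spread from reaching zero.

```python
import random
import statistics


def spread_of_estimates(n_views: int, n_videos: int = 200,
                        p_correct: float = 0.7, seed: int = 0) -> float:
    """Standard deviation, across videos, of each video's estimated error rate.

    Every simulated video has the SAME true error rate (1 - p_correct), so any
    spread returned here is pure sampling noise. All parameters are hypothetical.
    """
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_videos):
        wrong = sum(1 for _ in range(n_views) if rng.random() >= p_correct)
        estimates.append(wrong / n_views)
    return statistics.pstdev(estimates)


for n in (5, 50, 500):
    # Compare the simulated spread with the theoretical sqrt(p * (1 - p) / n).
    print(n, round(spread_of_estimates(n), 3), round((0.7 * 0.3 / n) ** 0.5, 3))
```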